
A/B testing, and content experimentation in general, is one of the most powerful levers in product-level advertising, but it is often neglected. According to a survey we recently conducted in partnership with IMRG, almost 88% of retailers and brands in the UK don’t run tests, mostly because they don’t have time (44%) or think it is technically impossible (27.5%).

Our Content Experimentation module was built to make it easy to run tests on product-level ad content and scientifically prove what does and doesn’t work.

Now celebrating its fifth birthday, our Content Experimentation module was recognised at three awards in 2021: the Retail Systems Awards 2021, the Global Business Excellence Awards 2021 and the UK Business Tech Awards 2021.

The module helps brands and retailers to easily launch experiments on product titles, product types and product images.

Once your feed is high quality, testing methods like A/B testing help you try out ideas and hypotheses and identify the winning variations that boost impressions, clicks and sales. Surfacing products and engaging with shoppers on shopping sites (Google Shopping), marketplaces (eBay), paid social channels (Facebook) and more becomes more cost-effective, with better ROAS. And e-commerce teams gain confidence, knowing that every optimisation they make works!

Over the last 12 months, clients who optimised and tested their feeds with our Content Experimentation module achieved, on average, a:

- 79% increase in impressions

- 109% increase in clicks

- 16.7% improvement in conversion rate

(Source: internal data.)

CONTENT EXPERIMENTATION IS KEY TO DRIVING INCREMENTAL GROWTH

Once you’ve optimised your feed to be attribute-rich, complete and channel-specific, the remaining lever for growth is making your product attributes relevant to buyer intent. You can do this by testing variations.

With our module you can run real A/B and multivariate tests (MVT).

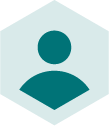

A/B TESTING

A/B testing compares the performance of two variants against each other. For example, you could test adding an attribute (colour, size, brand, etc.) to the title, or normalising a marketing name (emerald jumper vs green jumper). After a defined timeframe (normally 30 days), you compare the performance of the two variants and make an informed decision about which one to keep.
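To judge whether variant B really beat variant A at the end of the test window, rather than winning by chance, a common choice is a two-proportion z-test on click-through rate (CTR). The sketch below is a generic illustration, not a description of how our module calculates significance; the function name and the click and impression figures are hypothetical.

```python
import math

def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Compare the click-through rates (CTR) of variant A and variant B.

    Returns (ctr_a, ctr_b, z, p_value) from a pooled two-proportion z-test,
    with a two-sided p-value.
    """
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both variants perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (ctr_b - ctr_a) / std_err
    # Two-sided p-value: P(|Z| >= |z|) for a standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return ctr_a, ctr_b, z, p_value

# Illustrative numbers only: A = original title, B = title with colour added.
ctr_a, ctr_b, z, p = two_proportion_z_test(400, 20_000, 480, 20_000)
print(f"CTR A={ctr_a:.2%}  CTR B={ctr_b:.2%}  z={z:.2f}  p={p:.4f}")
```

With these made-up figures (2.0% vs 2.4% CTR on 20,000 impressions each) the difference is significant at the usual 5% level; with less traffic, the same gap might not be. Checking the result once at the end of the fixed window, rather than repeatedly during it, avoids inflating the false-positive rate.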

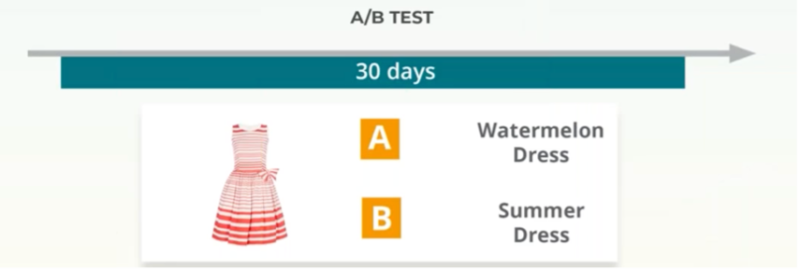

We recommend running real A/B tests rather than before-and-after tests.

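A before-and-after test compares two variants in two different time windows. While powerful, this type of test is time-biased: anything that changes between the two windows, such as seasonality, promotions or competitor activity, gets mixed into the result.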
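A real A/B test avoids that bias by running both variants in the same time window on two comparable groups of products, so seasonal or market changes affect both groups equally. One common way to form the groups, shown here as an assumption rather than as our module’s method, is to hash each product ID together with an experiment name. The split is stable across runs and does not depend on feed order. The SKU format and experiment name are hypothetical.

```python
import hashlib

def assign_group(product_id: str, experiment: str) -> str:
    """Deterministically assign a product to 'control' or 'test'.

    Hashing the experiment name together with the product ID gives a stable,
    roughly 50/50 split that does not depend on the order products are listed in.
    """
    digest = hashlib.sha256(f"{experiment}:{product_id}".encode("utf-8")).hexdigest()
    # Use the first 8 hex digits as an integer and split on parity.
    return "test" if int(digest[:8], 16) % 2 == 1 else "control"

# Hypothetical feed of 1,000 product IDs.
products = [f"SKU-{n:04d}" for n in range(1000)]
groups = {pid: assign_group(pid, "title-colour-test") for pid in products}
n_test = sum(1 for g in groups.values() if g == "test")
print(f"{n_test} test / {len(products) - n_test} control")
```

Products in the "test" group get the new variant and the "control" group keeps the original, both over the same dates. Using a different experiment name reshuffles the split, so one test’s groups don’t carry over into the next.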

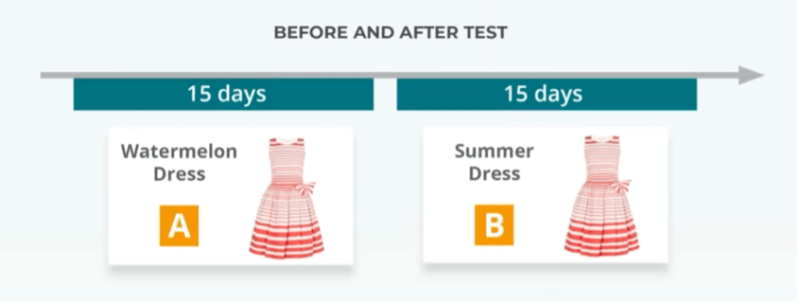

MULTIVARIATE TESTING

If you want to test changes to more than one variable at once, such as the title and the image, multivariate testing compares combinations of those changes, and we make it easy for you to determine the best-performing one.
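A minimal sketch of the idea, assuming a full factorial design where every combination of values becomes one variant. The variables, values and click/impression counts below are invented for illustration, and this is not necessarily how our module builds or scores variants.

```python
from itertools import product

# Hypothetical variables and candidate values for one product.
variables = {
    "title": ["Emerald Jumper", "Green Wool Jumper"],
    "image": ["model_shot", "flat_lay"],
    "product_type": ["Jumpers", "Knitwear > Jumpers"],
}

# Full factorial design: every combination of values is one variant (2 x 2 x 2 = 8).
names = list(variables)
variants = [dict(zip(names, combo)) for combo in product(*variables.values())]

# Placeholder (clicks, impressions) per variant, in the same order as `variants`.
# These numbers are invented for illustration only.
stats = [(120, 10_000), (135, 10_000), (110, 10_000), (150, 10_000),
         (125, 10_000), (160, 10_000), (118, 10_000), (142, 10_000)]

def ctr(clicks, impressions):
    """Click-through rate, guarding against variants with no impressions."""
    return clicks / impressions if impressions else 0.0

# Pick the variant with the highest click-through rate.
best_index = max(range(len(variants)), key=lambda i: ctr(*stats[i]))
best = variants[best_index]
print(best, f"CTR={ctr(*stats[best_index]):.2%}")
```

The number of variants grows multiplicatively with each variable you add, so each one receives less traffic. In practice, you would also check that the winner’s lead is statistically significant before rolling it out.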

PRIORITISE WHAT TO TEST

Testing is exciting, but picking products at random won’t give brands and retailers an edge over competitors. E-commerce requires digital marketing teams to adapt their ads quickly to changing trends and customer behaviour. This is where your Google Ads performance data comes in.

We suggest starting with invisible products: products that receive few or no impressions.

To do that, you’ve got to match your Google Ads performance data with your product data.
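As a rough illustration of that matching step, the sketch below joins product feed rows with performance rows on a shared product ID and flags items below an impressions threshold. The field names, the threshold of 100 and the rows themselves are hypothetical. It also assumes that a product missing from the performance data had zero impressions. This is not how the Data Connector is implemented.

```python
# Hypothetical product feed and Google Ads performance rows, joined on a shared product ID.
feed = [
    {"id": "SKU-001", "title": "Emerald Jumper", "product_type": "Knitwear"},
    {"id": "SKU-002", "title": "Navy Chinos", "product_type": "Trousers"},
    {"id": "SKU-003", "title": "Linen Shirt", "product_type": "Shirts"},
    {"id": "SKU-004", "title": "Cord Skirt", "product_type": "Skirts"},
]
performance = [
    {"id": "SKU-001", "impressions": 0, "clicks": 0},
    {"id": "SKU-002", "impressions": 5400, "clicks": 81},
    {"id": "SKU-004", "impressions": 42, "clicks": 1},
    # SKU-003 has no row: treated below as zero impressions.
]

def find_invisible_products(feed, performance, threshold=100):
    """Return feed items with fewer than `threshold` impressions (hypothetical helper)."""
    impressions_by_id = {row["id"]: row["impressions"] for row in performance}
    invisible = []
    for item in feed:
        # Assumption: a product missing from the performance data had 0 impressions.
        impressions = impressions_by_id.get(item["id"], 0)
        if impressions < threshold:
            invisible.append({**item, "impressions": impressions})
    # Sort so products with zero impressions come first.
    return sorted(invisible, key=lambda row: row["impressions"])

for row in find_invisible_products(feed, performance):
    print(row["id"], row["title"], row["impressions"])
```

The resulting list is a natural shortlist of candidates for title or product type tests.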

Behold! Our Data Connector is there to help. With the Data Connector, you can combine your performance metrics with your product data and uncover growth opportunities.

The benefit? You can easily test optimisations on these products and roll out the winners, turning them into best sellers with more impressions, clicks and sales.

Customers using the Data Connector found that the ability to identify products and take action at scale saves time, improves product visibility and increases ROI.

“We already knew that 56% of our products on Google Shopping were delivering 0 impressions, but by using the Data Connector to apply dynamic custom labels to these items on a daily basis, we were able to generate incremental revenue through a fully automated strategy which now focusses on giving these lines the exposure they need to grow.”

Kieran Stott, Paid Media & Marketing Manager, Roman Originals

FATFACE ACHIEVED +24% ROAS BY A/B TESTING THEIR PRODUCT FEEDS

FatFace had a clear goal: increase their return on ad spend and make their campaigns more profitable. They quickly realised that, once basic optimisation was in place, the only way to keep growing was to test variations of product attributes.

Some tests they focused on were:

- Removing marketing names from product titles

- Identifying low-performing products and adding relevant attributes to their product titles

- Adding relevant terms to product types
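The first two tests both rewrite product titles. Below is a minimal sketch of generating a test-variant title. It assumes a product record with colour and material attributes and a known marketing name, all invented here. The helper is hypothetical and is not FatFace’s or our module’s actual logic.

```python
import re

def build_test_title(title, attrs, marketing_name=None, add=("colour", "material")):
    """Build a test-variant product title (hypothetical helper).

    1. Removes the marketing name from the original title, if one is given.
    2. Prepends the listed attributes that the title does not already mention.
    """
    if marketing_name:
        # Drop the marketing name as a whole word, ignoring case.
        pattern = rf"\b{re.escape(marketing_name)}\b"
        title = re.sub(pattern, "", title, flags=re.IGNORECASE)
    # Collapse any double spaces left behind by the removal.
    title = " ".join(title.split())
    # Simple substring check: skip attributes already present in the title.
    extras = [attrs[key] for key in add
              if attrs.get(key) and attrs[key].lower() not in title.lower()]
    return " ".join([*extras, title]).strip()

original = "Harriet Jumper"
variant = build_test_title(original, {"colour": "Green", "material": "Wool"},
                           marketing_name="Harriet")
print(f"A: {original}\nB: {variant}")
```

The original title stays as variant A and the generated one becomes variant B, ready for an A/B test like the ones described above.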

By doing so, they achieved +22% impressions, +17% clicks, +19% orders and +24% ROAS.

We sat down with them to discuss how they used A/B testing and our module to drive performance.

You can watch the full FatFace webinar to see exactly which tests they are running, or grab your copy of the FatFace case study.

WANT TO SEE WHY OUR MODULE KEEPS WINNING AWARDS?

You’ve read about it. You’ve seen its awards. How about seeing it in action? Book a demo and one of our experts will be in touch!

Could experimentation nearly double your impressions and clicks?

Get the right experimentation tool!

Book a free demo
